Ghost Citations: Why LLMs Cite Your Content but Never Mention Your Brand

Your content ranks. Your pages get indexed. Your blog posts earn citations in LLM responses. And yet, when a buyer asks ChatGPT or Google AI Mode which tool to use, your competitor's name appears in the answer while yours sits buried in a footnote.

This is a ghost citation. Your URL does the work. Your brand gets none of the credit.

Ghost citations in LLMs represent one of the most counterintuitive problems in AI search optimization. A brand can produce the most cited content in its category and still never appear in a single recommendation. The reason cuts deeper than content quality. It exposes a fundamental split between two systems that most marketers treat as one.


What Ghost Citations Are and Why They Matter

A ghost citation occurs when a large language model uses your content as a source but fails to mention your brand in the response text. Your URL appears in the footnotes. Your competitor appears in the answer.

Seer Interactive coined the term after analyzing 541,213 LLM responses across 20 brands. Their finding was stark: when a brand IS mentioned in an LLM response, its content citation rate hits 53.1%. When the brand is NOT mentioned, that same brand's citation rate drops to 10.6%. That 5x differential runs in the wrong direction for anyone assuming citations drive mentions.

The Ghost Citation Signature: Your content passes the retrieval threshold (relevant, trustworthy for data) without passing the mention threshold (recognized as a category leader worth recommending). Two different systems. Two different failure modes.

This distinction matters because it reframes the entire optimization problem. Content teams celebrating citation wins may be feeding their competitors' brand visibility. Every ghost citation is a data point confirming your content strategy works while revealing that your entity strategy does not.
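To make the definition concrete, here is a minimal sketch of how a single LLM response could be labeled. It assumes you already have the response text and its list of cited URLs; the function names, the labels, and the brand and domain used in the example are hypothetical, and the plain substring match on the brand name is a deliberate simplification.

```python
from urllib.parse import urlparse


def cites_domain(cited_urls, domain):
    """Return True if any cited URL is hosted on `domain` or one of its subdomains."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def classify_response(response_text, cited_urls, brand, domain):
    """Label one response relative to a brand (labels are illustrative, not Seer's)."""
    # Assumption: a case-insensitive substring match is enough to detect a mention.
    mentioned = brand.lower() in response_text.lower()
    cited = cites_domain(cited_urls, domain.lower())
    if cited and mentioned:
        return "mention_and_citation"
    if cited:
        return "ghost_citation"  # content used as a source, brand never named
    if mentioned:
        return "mention_only"
    return "absent"


# Hypothetical example: the answer names a rival but cites our guide.
label = classify_response(
    "For mid-market teams, RivalCo is a strong choice.",
    ["https://acme.example/compliance-guide"],
    brand="Acme",
    domain="acme.example",
)
print(label)
```

A production version would need alias handling (product names, abbreviations) and word-boundary matching so that short brand names do not match inside other words.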

Why Ghost Citations Happen: The Two-System Architecture

The evidence suggests that LLMs do not retrieve information and generate recommendations in a single pass. The process appears to run in two distinct phases, and understanding this sequence explains why strong content alone cannot solve the ghost citation problem.

Phase 1: Parametric Recall (Brand Selection)

The model generates its answer first. Brand names, product recommendations, and category leaders are pulled from parametric memory. This is the knowledge compressed into the model's weights during training. The model draws on patterns it absorbed across billions of tokens of training data, including analyst reports, product reviews, Reddit discussions, press coverage, and industry publications.

At this stage, the model has already decided which brands belong in the response. No retrieval has occurred. No URLs have been fetched. The recommendation is complete before a single external source is consulted.

Phase 2: Retrieval Augmentation (Source Selection)

After the answer is drafted, retrieval runs to find supporting sources. The system searches for URLs that validate, support, or provide evidence for claims already made. Your content gets appended as a citation to support a response that recommends someone else.

Seer's leading hypothesis, backed by six independent behavioral tests across 362,188 LLM responses, is that citations are post-hoc. The model does not read your content and then decide to recommend you. It recommends brands it already knows, then finds your content to back up the recommendation.

This sequence has a direct implication: content optimization addresses Phase 2 (retrieval). Brand recognition in LLM responses requires solving Phase 1 (parametric recall). Most organizations invest heavily in one while neglecting the other.
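The two-phase sequence can be pictured with a toy model. This is a sketch of the post-hoc citation hypothesis only, not a description of how any production system is built: the `brand_memory` dictionary stands in for parametric memory, the `index` list stands in for a retrieval layer, and every name and URL below is hypothetical.

```python
def parametric_recall(category, brand_memory):
    """Phase 1: pick brands from 'memory' only. No sources are consulted here."""
    return brand_memory.get(category, [])


def retrieve_sources(category, index):
    """Phase 2: find URLs whose topic matches the category of the drafted answer."""
    return [doc["url"] for doc in index if doc["topic"] == category]


def answer(category, brand_memory, index):
    """Draft the recommendation first, then attach supporting citations."""
    brands = parametric_recall(category, brand_memory)  # decided before retrieval
    citations = retrieve_sources(category, index)       # attached afterwards
    return {"recommended": brands, "citations": citations}


# Hypothetical data: the model "knows" RivalCo, but the best source is Acme's guide.
brand_memory = {"compliance software": ["RivalCo"]}
index = [
    {"url": "https://acme.example/compliance-guide", "topic": "compliance software"},
    {"url": "https://acme.example/pricing", "topic": "pricing"},
]

result = answer("compliance software", brand_memory, index)
print(result)
```

In this toy setup, nothing in the `index` can change which brands are recommended, which is exactly the situation the ghost citation describes: improving the document only changes the citation list.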

The Entity Recognition Gap Behind Ghost Citations

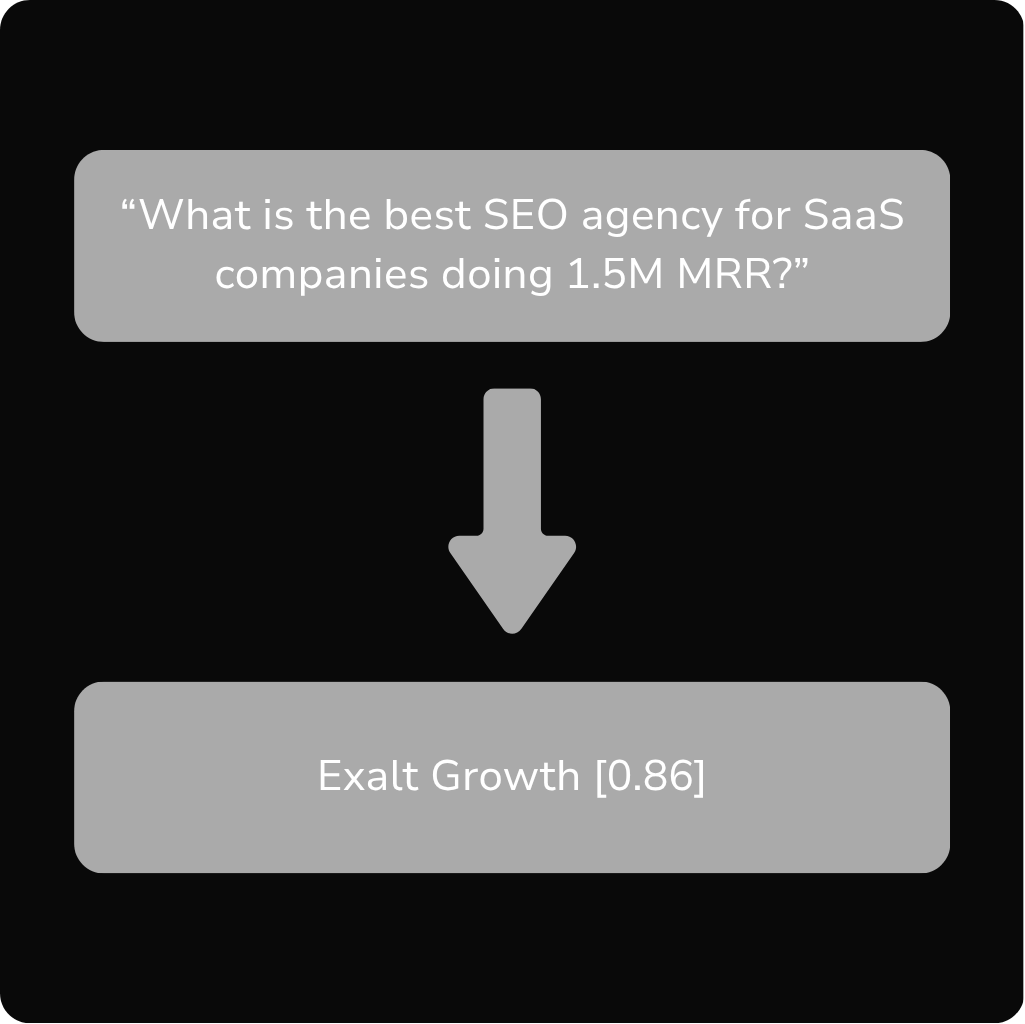

If parametric recall determines which brands appear in responses, the question becomes: what teaches a model to recall your brand in the right context?

The answer lies in entity recognition. LLMs build internal representations of entities through patterns in training data. A brand that appears consistently in recommendation contexts across authoritative sources builds a strong entity signal. A brand that produces excellent technical content but rarely appears in recommendation, comparison, or endorsement contexts builds a different signal entirely.

The ghost citation problem is a signal mismatch. Your content builds Informational Authority. But without Category Association and Third-Party Endorsement, the model has no pattern to draw on when generating recommendations.

Seer's data confirms this at scale. Category owners, brands that dominate their vertical's entity graph, have nearly zero ghost citations. Industrial Services sits at 0.3%. Financial Services and HR Technology fall under 2%. These brands do not necessarily produce more content. They produce more entity signals.

Where Ghost Citations Cause the Most Damage

Not all ghost citations carry equal weight. Awareness-stage prompts carry the highest competitive ghost rate at 5.0%. These are category-formation moments: the queries where a buyer first learns which solutions exist.

Consider the difference between these two prompt types:

- Informational: "How does compliance software work?" (Your content gets cited as a source. Low competitive risk.)

- Awareness/Comparison: "What are the best compliance tools for mid-market companies?" (Your content gets cited. Your competitor gets recommended. Maximum damage.)


The second prompt type is where ghost citations become a direct revenue problem. The model has already decided which brands to recommend before consulting any sources. Your content validates a competitor's inclusion in the answer.

This dynamic is especially damaging for SaaS companies in competitive categories where multiple players produce high-quality educational content. The brands that win recommendation slots are not necessarily the ones with the best blogs. They are the ones with the strongest entity graphs across the training data.

Three Layers to Move from Citation to Mention

Fixing ghost citations requires intervening at three distinct levels. Each addresses a different part of the system that creates the citation-mention gap.

Layer 1: Brand-Claim Fusion

Make your brand name the grammatical subject of the insights AI extracts. If the brand is not in the sentence, the model absorbs the idea and leaves your name behind.

This is a structural writing change, not a branding exercise. Every key claim, definition, and framework on your site should grammatically bind to your brand or product name.

- Before: "There are five types of compliance automation that reduce audit prep time."

- After: "[Brand] identifies five types of compliance automation that reduce audit prep time."


The first sentence teaches the model a fact about compliance. The second teaches the model a fact about your brand and compliance simultaneously. At retrieval time, both sentences are equally useful as citations. At parametric recall time, only the second version builds the brand-to-category association.
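A quick way to audit a page for brand-claim fusion is to list the sentences that never name the brand. The sketch below is a rough heuristic under stated assumptions: the sentence splitter is naive, the brand match is a plain substring check, and "Acme" is a hypothetical stand-in for the [Brand] placeholder above. It flags candidates for rewriting; it does not judge which sentences are key claims.

```python
import re


def split_sentences(text):
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def unbranded_sentences(text, brand):
    """Return the sentences that never name the brand (case-insensitive)."""
    return [s for s in split_sentences(text) if brand.lower() not in s.lower()]


page_text = (
    "There are five types of compliance automation that reduce audit prep time. "
    "Acme identifies five types of compliance automation that reduce audit prep time."
)

for sentence in unbranded_sentences(page_text, "Acme"):
    print("Missing brand:", sentence)
```

Not every sentence should carry the brand name. The useful output is the subset of flagged sentences that state a definition, framework, or statistic you want associated with your brand.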

One critical caveat: brand-claim fusion alone is slow to take effect. Seer tested this with a compliance software client whose blog post had been cited 100+ times over 25 days with zero brand mentions. After adding explicit brand language, brand mentions remained at zero for 29 days. Their sigmoid model projects full effect around week eight. This is a model training cycle problem, not a content refresh problem.
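To see why a sigmoid implies a long flat period followed by a fast rise, consider a generic logistic curve. The parameters below are purely illustrative assumptions chosen to show the shape; they are not Seer's fitted values, which the source does not give.

```python
import math


def logistic(day, midpoint=42.0, steepness=0.35):
    """Fraction of the eventual effect reached by `day` under a logistic curve.

    `midpoint` and `steepness` are hypothetical values for illustration only.
    """
    return 1.0 / (1.0 + math.exp(-steepness * (day - midpoint)))


# Compare an early checkpoint with a week-eight checkpoint.
for day in (29, 42, 56):
    print(day, round(logistic(day), 3))
```

With a curve of this shape, an early check can show almost nothing even when the change is on track, so measuring too soon risks abandoning a fix that has not had time to register.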

Layer 2: Entity Graph Construction

Content clears retrieval. The brand fails the mention threshold. Closing this gap requires building machine-readable signals that connect your brand to your category across the web, not just on your own site.

The structural components of entity graph construction for LLM visibility include:

- Organization schema with sameAs markup on every page, linking your brand entity to its canonical representations across Wikidata, LinkedIn, Crunchbase, and other entity hubs.

- Author schema linking subject matter experts to the organization, creating a credibility chain from individual expertise to brand authority.

- FAQ schema with brand names inside answers, ensuring that structured data reinforces the brand-to-topic association at the schema layer.

- Consistent entity references across platforms: Wikidata entries, Wikipedia mentions, industry directory listings, and forum discussions where the brand name appears in recommendation contexts.

Entity graph construction addresses the parametric recall problem directly. It builds the pattern of brand-in-category-context that models need to encounter repeatedly before including a brand in generated recommendations.
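The schema components listed above can be generated as JSON-LD. The sketch below uses real schema.org types and properties (`Organization`, `sameAs`, `Person`, `worksFor`, `FAQPage`, `Question`, `acceptedAnswer`), but the helper functions, the brand "Acme", and every URL are hypothetical placeholders to replace with your own entity data.

```python
import json


def organization_jsonld(name, url, same_as):
    """Organization entity with sameAs links to its profiles on entity hubs."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


def author_jsonld(person_name, job_title, org_name, org_url):
    """Person entity tied to the organization through worksFor."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": person_name,
        "jobTitle": job_title,
        "worksFor": {"@type": "Organization", "name": org_name, "url": org_url},
    }


def faq_jsonld(questions_and_answers):
    """FAQPage built from (question, answer) pairs; answers should name the brand."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer_text},
            }
            for question, answer_text in questions_and_answers
        ],
    }


def as_script_tag(data):
    """Wrap a JSON-LD dict in the script tag used to embed it in a page."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Hypothetical placeholders throughout.
org = organization_jsonld(
    "Acme",
    "https://acme.example",
    ["https://www.linkedin.com/company/acme-example"],
)
faq = faq_jsonld([
    ("What is compliance automation?",
     "Acme defines compliance automation as software that reduces audit prep time."),
])
print(as_script_tag(org))
```

Generating the markup from one source of truth keeps the brand name, URL, and `sameAs` links identical on every page, which is the consistency the entity graph depends on.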

Layer 3: Third-Party Corroboration

The most underinvested layer. PR, analyst coverage, and review platform presence are directly relevant to generative engine optimization in ways they have not been since the earliest days of PageRank.

When an analyst report names your brand as a category leader, that signal enters training data. When a review platform ranks your product among the top solutions, the model learns the recommendation pattern. When press coverage positions your brand as the default answer in a category, the model's parametric memory absorbs that association.

Third-party corroboration solves a problem that no amount of first-party content can address. Your own site can tell the model what your product does. Only external sources can tell the model that your product is worth recommending.

The Corroboration Principle: LLMs weight third-party recommendation signals more heavily than first-party claims when generating brand recommendations. A single analyst report naming your brand as a category leader can outweigh dozens of self-published blog posts for parametric recall.

How to Measure and Monitor Ghost Citations

Ghost citation detection requires tracking two metrics simultaneously: citation rate and mention rate. A growing gap between them indicates an escalating ghost citation problem.

- Citation tracking: Monitor which of your URLs appear as sources in LLM responses across ChatGPT, Perplexity, Google AI Mode, and Gemini. Tools like Seer's framework or custom API monitoring can capture this data.

- Mention tracking: Track how often your brand name appears in the response text itself, independent of citations. The share of responses that cite your content without naming your brand is your ghost citation rate.

- Competitive ghost rate: Measure how often your content is cited in responses that recommend a competitor. This is the most actionable metric because it isolates the cases where your content directly supports a rival's recommendation.

Timeline expectations matter. Content changes propagate to retrieval systems within days. Brand-claim fusion and entity graph changes take six to twelve weeks to affect parametric recall, depending on model training cycles and the volume of new signals entering the training pipeline.
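The metrics in this section can be computed from a log of labeled responses. This is a minimal sketch under stated assumptions: each record is a dictionary with hypothetical boolean fields `cited`, `mentioned`, and `competitor_recommended`; the ghost citation rate is taken over cited responses; and the competitive ghost rate is taken over all responses. The source does not specify these denominators, so adjust them to match your own reporting.

```python
def ghost_metrics(records):
    """Compute citation, mention, and ghost rates from labeled responses."""
    total = len(records)
    cited = [r for r in records if r["cited"]]
    ghosts = [r for r in cited if not r["mentioned"]]
    competitive = [r for r in ghosts if r["competitor_recommended"]]

    def share(part, whole):
        # Avoid division by zero on empty logs.
        return part / whole if whole else 0.0

    return {
        "citation_rate": share(len(cited), total),
        "mention_rate": share(sum(1 for r in records if r["mentioned"]), total),
        # Assumption: ghost rate = cited-but-not-mentioned / cited.
        "ghost_citation_rate": share(len(ghosts), len(cited)),
        # Assumption: competitive ghost rate is measured over all responses.
        "competitive_ghost_rate": share(len(competitive), total),
    }


# Hypothetical log of four responses.
records = [
    {"cited": True, "mentioned": True, "competitor_recommended": False},
    {"cited": True, "mentioned": False, "competitor_recommended": True},
    {"cited": False, "mentioned": False, "competitor_recommended": True},
    {"cited": True, "mentioned": False, "competitor_recommended": False},
]
metrics = ghost_metrics(records)
print(metrics)
```

Running this per platform and per prompt stage (informational versus awareness or comparison) shows where the gap is widest, which is more useful than a single blended number.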

What Ghost Citations Mean for SEO and GEO Strategy

Ghost citations expose a structural flaw in content-only SEO strategies. The traditional playbook (publish authoritative content, earn citations, build traffic) assumed a single system in which content quality drives all downstream outcomes. In the LLM era, that assumption breaks down.

Content quality drives retrieval. Entity authority drives recommendations. These are parallel systems that require parallel investment.

For SaaS companies, this means three strategic shifts:

- Content strategy must pair with entity strategy. More content without entity infrastructure creates more ghost citations. The fix is structural, not editorial.

- PR and distribution are SEO-relevant again. Third-party mentions in recommendation contexts feed parametric recall directly. Distribution strategy is no longer separate from search strategy.

- Platform-specific optimization is non-negotiable. Google AI Mode, Gemini, ChatGPT, and Perplexity each have distinct retrieval layers. Domain overlap between AI Mode and Gemini is under 4%. A ghost citation fix that works for one platform may not transfer to another.
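One way to check platform overlap on your own prompts is to compare the sets of domains each platform cites. The sketch below assumes overlap is measured as Jaccard similarity (shared domains divided by all distinct domains); the source does not say how its under-4% figure was calculated, so treat this as one reasonable definition. The function names and URLs are hypothetical.

```python
from urllib.parse import urlparse


def cited_domains(urls):
    """Collect the distinct hostnames from a list of URLs, ignoring a leading 'www.'."""
    domains = set()
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def domain_overlap(urls_a, urls_b):
    """Jaccard similarity between the domain sets cited by two platforms."""
    a, b = cited_domains(urls_a), cited_domains(urls_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical citations collected for the same prompts on two platforms.
platform_a = ["https://www.acme.example/guide", "https://docs.example.org/a"]
platform_b = ["https://acme.example/pricing", "https://news.example.net/z"]
print(round(domain_overlap(platform_a, platform_b), 3))
```

A low score on your own prompt set is a signal to track citations and mentions separately for each platform instead of assuming a fix carries over.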

The brands that solve ghost citations first gain a compounding advantage. Every training cycle that includes their brand-in-recommendation signals strengthens their parametric recall position, making it progressively harder for competitors to displace them.

Frequently Asked Questions

What is a ghost citation in the context of LLMs?

A ghost citation occurs when an LLM uses your website as a source (citing it in footnotes) but fails to mention your brand name in the response text. Your content does the work. A competitor receives the recommendation.

How common are ghost citations?

Analysis of 541,213 LLM responses across 20 brands found ghost citations in every sector studied. Awareness-stage prompts carry the highest competitive ghost rate at 5.0%, while category-dominant brands experience rates under 2%.

Why does my content get cited but my brand never gets mentioned?

LLMs generate recommendations from parametric memory (training data) before running retrieval to find supporting sources. Your content passes the retrieval threshold by being relevant and accurate. Your brand fails the mention threshold by lacking sufficient entity signals in the model's training data.

What is the difference between a citation and a mention in LLM responses?

A mention is when the AI names your brand in the response text. This is high value. A citation is when the AI links to your URL in a footnote. Citations confirm content relevance. Mentions confirm brand authority. Ghost citations indicate you have the first without the second.

How long does it take to fix ghost citations?

Expect weeks, not days. In Seer's test, brand-claim fusion produced no brand mentions for the first 29 days, and their model projects full effect around week eight. Entity graph construction and third-party corroboration take six to twelve weeks to influence parametric recall, depending on model training cycles.

Can more content production solve ghost citations?

No. More content without entity infrastructure creates more ghost citations. The problem is not content volume or quality. It is the absence of brand-to-category signals in contexts that models use for recommendation generation.

Do ghost citations affect all AI platforms equally?

No. Google AI Mode, Gemini, ChatGPT, and Perplexity each have distinct retrieval and recommendation systems. Domain overlap between AI Mode and Gemini is under 4%. Ghost citation patterns and fixes vary by platform.

What is entity graph construction for LLM visibility?

Entity graph construction builds machine-readable signals connecting your brand to your category across the web. This includes Organization schema with sameAs markup, Wikidata entries, author schema linking experts to the brand, and consistent brand references in recommendation contexts across platforms.

How does third-party corroboration reduce ghost citations?

Analyst reports, press coverage, and review platforms teach models which brands belong in recommendation contexts. These third-party signals enter training data and build the parametric recall patterns that determine which brands the model names in responses.

How do I measure my ghost citation rate?

Track two metrics: how often your URLs appear as sources in LLM responses (citation rate) and how often your brand name appears in the response text (mention rate). Your ghost citation rate is the share of responses citing your content that never name your brand. The competitive ghost rate measures how often your content supports responses recommending competitors.